Roblox is using AI to strengthen safety in kids’ chats as the platform adapts to a growing audience that includes older users. With billions of chat messages sent each day and a wide range of user-created content, Roblox faces a real challenge in keeping the platform safe and friendly for players.

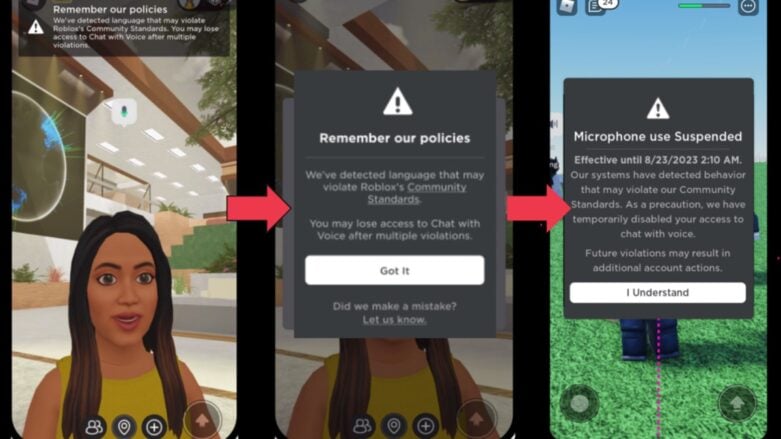

Roblox’s safety approach centers on a combination of artificial intelligence and human expertise. The company’s text filter, refined for Roblox-specific language, can now identify policy-violating content in all supported languages. This is particularly important given the recent launch of real-time AI chat translations. Additionally, an in-house system moderates voice communications in real time, flagging inappropriate speech within seconds. To complement this, real-time notifications warn users when their speech comes close to violating Roblox’s guidelines, which has reduced abuse reports by over 50%.

Roblox carefully moderates visual content using computer vision technology to evaluate user-created avatars, accessories, and 3D models. Items can be automatically approved, flagged for human review, or rejected when necessary. This process also examines the individual parts of a model to prevent potentially harmful combinations from being overlooked.
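The three-way outcome described above can be pictured with a small sketch. Everything in it is an assumption made for illustration: the `Item` type, the risk scores, and both thresholds are hypothetical, and nothing here reflects Roblox's actual system. The one idea it borrows from the article is that individual parts are checked as well as the whole item, so the sketch takes the worst score rather than an average.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    APPROVE = "approve"
    HUMAN_REVIEW = "human_review"
    REJECT = "reject"


# Hypothetical thresholds; the article gives no real values.
REVIEW_THRESHOLD = 0.4
REJECT_THRESHOLD = 0.9


@dataclass
class Item:
    name: str
    whole_score: float        # hypothetical risk score for the assembled item, 0.0 to 1.0
    part_scores: list[float]  # hypothetical risk scores for each individual part


def triage(item: Item) -> Decision:
    """Route an item to approve, human review, or reject."""
    # Take the worst score so one risky part is not hidden by benign ones.
    risk = max([item.whole_score, *item.part_scores])
    if risk >= REJECT_THRESHOLD:
        return Decision.REJECT
    if risk >= REVIEW_THRESHOLD:
        return Decision.HUMAN_REVIEW
    return Decision.APPROVE


if __name__ == "__main__":
    hat = Item("plain hat", whole_score=0.1, part_scores=[0.05, 0.2])
    shirt = Item("layered shirt", whole_score=0.2, part_scores=[0.1, 0.55])
    print(triage(hat).value, triage(shirt).value)
```

In this sketch the shirt looks fine as a whole but is still sent to human review because one of its parts scores above the review threshold.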

Moderation is only one part of Roblox’s safety efforts. The company has also backed a child safety bill in California, which is a significant step. However, even while facing criticism over its pay terms, Roblox maintains that its pay methods do not exploit children.

The company is building multimodal AI systems that are trained on several kinds of data to improve accuracy. This technology helps Roblox identify how different content elements can combine into harmful situations that a unimodal AI might not catch. In effect, several models work together rather than a single model being expected to handle everything. For instance, an innocent-looking avatar could cause problems when paired with a harassing text message. A good example from another platform: a TikTok user sets the Netflix logo as a profile image, then chooses a six-letter username that is a banned word starting with N but written without the N, because the profile image supplies that letter.

While automation is essential for handling the sheer volume of moderation work, Roblox stresses the ongoing need for human involvement. Moderators tune the AI models, handle complicated cases, and ensure overall accuracy. For serious investigations, such as those related to extremism or child endangerment, specialized internal teams step in to identify and deal with the bad actors.
